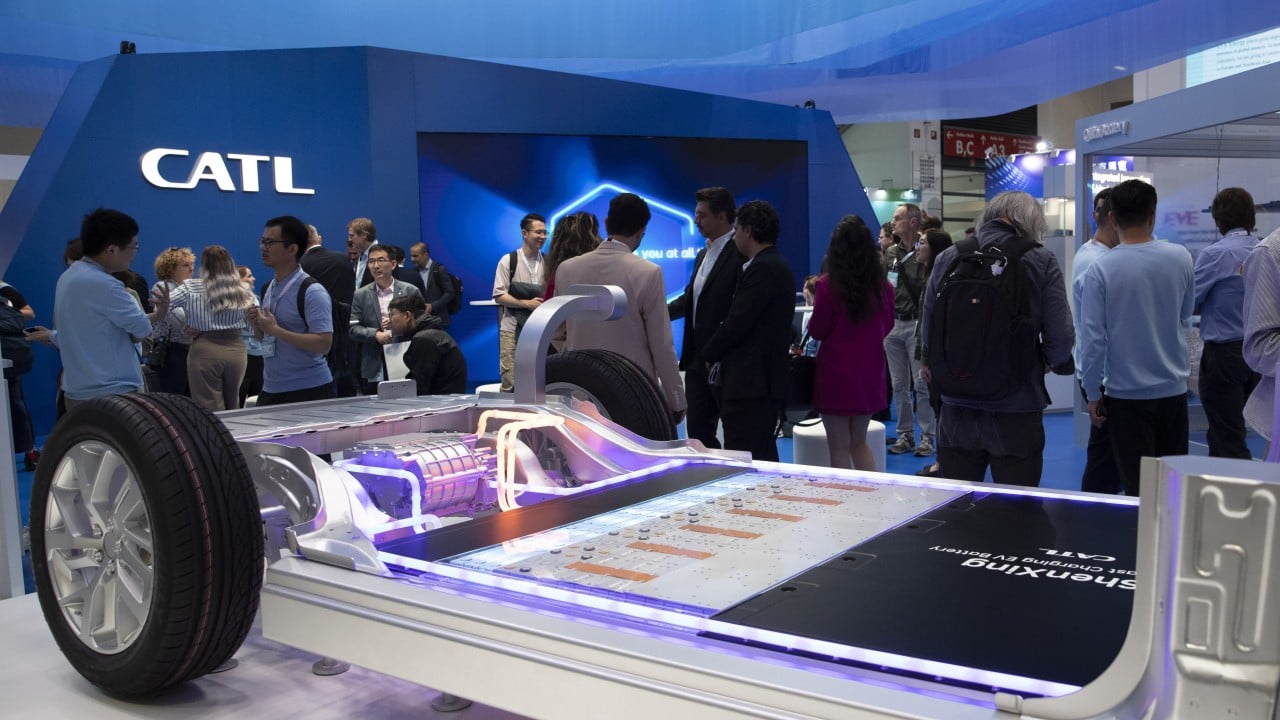

The transition of battery research from empirical trial-and-error to computational inference is among the most significant shifts in automotive supply chain economics. When industry leaders such as Contemporary Amperex Technology Co. Limited (CATL) announce a greater reliance on artificial intelligence in research and development, the goal is not merely incremental efficiency. It is a fundamental change to the cost function that governs materials science.

Battery chemistry has historically been constrained by the Edisonian approach: physically synthesizing and testing thousands of permutations. Each iteration requires material sourcing, electrode fabrication, cell assembly, and weeks, sometimes months, of cycle-life testing to validate stability and energy density. This bottleneck sets the pace of innovation. By replacing much of the early-stage physical validation with predictive models, firms can avoid a large share of the time and capital costs of the traditional wet lab.

The Entropy Trap In Battery R&D

Traditional battery development follows a linear, high-entropy process. Researchers hypothesize a new electrolyte additive or cathode architecture. This triggers a sequence of physical experiments, most of which yield null results. The failure rate in materials discovery is high, and the feedback loop is slow.

Artificial intelligence addresses this through high-throughput virtual screening. Rather than synthesizing 10,000 candidate lithium-ion cathode compositions, a screening pipeline combines density functional theory (DFT) calculations with machine learning to identify the top 0.1% of candidates, those with the highest predicted probability of success.
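The ranking step of such a funnel can be sketched in a few lines. This is a minimal illustration, not any firm's pipeline: `hypothetical_surrogate` is a placeholder that returns random scores where a real system would call a model trained on DFT results, and the `Candidate` fields are invented for the example. Only the 10,000-candidate pool and the 0.1% cut come from the text.

```python
import random
from dataclasses import dataclass


@dataclass
class Candidate:
    composition: str        # hypothetical label, e.g. "cathode-00042"
    predicted_score: float  # surrogate estimate of the probability of success


def hypothetical_surrogate(rng: random.Random) -> float:
    # Placeholder for an ML model trained on DFT results; here it just draws a random score.
    return rng.random()


def screen(candidates: list[Candidate], keep_fraction: float = 0.001) -> list[Candidate]:
    """Rank candidates by predicted score and keep the top fraction (at least one)."""
    k = max(1, round(len(candidates) * keep_fraction))
    ranked = sorted(candidates, key=lambda c: c.predicted_score, reverse=True)
    return ranked[:k]


rng = random.Random(0)
pool = [Candidate(f"cathode-{i:05d}", hypothetical_surrogate(rng)) for i in range(10_000)]
shortlist = screen(pool)
print(f"{len(pool)} candidates screened, {len(shortlist)} sent to synthesis")
```

Of the 10,000 scored compositions, only the 10 highest-ranked would move on to physical synthesis. The economics follow from the cost asymmetry: scoring a candidate in software is far cheaper than making and cycling it.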

The objective is to shrink the design space. This creates a shift in operational focus:

- Computational Screening: AI algorithms predict ionic conductivity, thermal stability, and electrochemical potential before a single gram of material is weighed.

- Generative Molecular Design: Neural networks propose novel molecular structures that meet specific energy density targets, essentially "inventing" materials that human chemists might overlook.

- Process Parameter Optimization: Machine learning models identify the temperature, pressure, and humidity settings during manufacturing that minimize lattice defects, which directly affect yield rates.
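Process parameter optimization can be illustrated with a search over a surrogate yield model. The sketch below assumes a toy quadratic model, `hypothetical_yield_model`, with an invented optimum at a 120 °C drying temperature and a -40 °C dew point (a humidity proxy). To keep it short it covers only two of the three parameters named above. An exhaustive grid search is the simplest stand-in here; a production system would more plausibly use Bayesian optimization or designed experiments.

```python
from itertools import product


def hypothetical_yield_model(drying_temp_c: float, dew_point_c: float) -> float:
    # Toy quadratic surrogate: yield peaks at an assumed 120 °C drying temperature
    # and a -40 °C dew point (a proxy for humidity). Coefficients are illustrative only.
    return 0.97 - 1e-5 * (drying_temp_c - 120) ** 2 - 5e-5 * (dew_point_c + 40) ** 2


def grid_search(model, temps, dew_points):
    """Evaluate every (temperature, dew point) pair and return the highest-yield setting."""
    return max(product(temps, dew_points), key=lambda setting: model(*setting))


best = grid_search(hypothetical_yield_model, range(90, 151, 5), range(-60, -19, 5))
print(best, round(hypothetical_yield_model(*best), 4))
```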

Quantifying The Computational Shift

The economic value of this shift lies in the reduction of "Time-to-Market" (TTM). If a traditional cycle from material conception to pilot-line validation takes 24 months, a computation-first approach aims to reduce this by 40% to 60%.
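Applied to the illustrative 24-month baseline, the 40% to 60% target shortens the cycle to between 9.6 and 14.4 months, which saves 14.4 to 9.6 months respectively. A quick check of that arithmetic follows. The helper name is ours; the figures are the text's.

```python
def compressed_cycle_months(baseline_months: float, reduction: float) -> float:
    """Cycle length after a fractional reduction (0.4 means 40% shorter)."""
    return baseline_months * (1 - reduction)


baseline = 24  # months, the illustrative traditional cycle from the text
for reduction in (0.40, 0.60):
    new = compressed_cycle_months(baseline, reduction)
    print(f"{reduction:.0%} reduction: {new:.1f} months (saves {baseline - new:.1f})")
```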

In a market where the cost per kilowatt-hour (kWh) is the primary determinant of mass-market EV adoption, reducing R&D cycle time is a direct subsidy to the bottom line. It allows faster iteration on battery chemistry, such as the transition from lithium iron phosphate (LFP) to manganese-enriched variants or solid-state architectures, without absorbing the full cost of failed physical prototypes.

The relationship between data volume and model accuracy is non-linear, but its direction is consistent: the more data a firm holds on manufacturing defects, battery degradation, and thermal runaway, the more accurate its predictive models become. This creates a data moat. CATL, which commands a significant share of the global battery market, likely holds one of the world's most extensive datasets on battery performance under real-world conditions. That lets it train models on failure modes that competitors with smaller production volumes cannot replicate.
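One way to make "non-linear" concrete is a power-law learning curve, error = a·N^(-b), a form often observed empirically in machine learning. Whether battery models follow it, and with what constants, is an assumption here. The values `a = 1.0` and `b = 0.3` are illustrative, not fitted. Under this form, every tenfold increase in data cuts error by the same factor (10^-0.3, roughly 0.5). The absolute gains shrink, yet a firm holding ten times more data keeps a persistent accuracy edge.

```python
def power_law_error(n_samples: int, a: float = 1.0, b: float = 0.3) -> float:
    """Assumed learning curve: error = a * N**(-b). a and b are illustrative, not fitted."""
    return a * n_samples ** (-b)


sizes = [10_000, 100_000, 1_000_000, 10_000_000]
errors = [power_law_error(n) for n in sizes]
for n, err in zip(sizes, errors):
    print(f"{n:>12,} samples -> relative error {err:.4f}")
```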

Operational Mechanics Of The Materials Pipeline

The deployment of AI in this sector is not monolithic. It functions through three distinct layers:

- Physics-Informed Neural Networks (PINNs): Purely data-driven models have no built-in knowledge of thermodynamics and can return physically impossible predictions. PINNs incorporate physical constraints, such as conservation of energy or mass-transport equations, into the loss function. Predictions that violate these constraints are penalized during training, which pushes the model toward physically plausible outputs rather than mere statistical correlations.

- Digital Twin Fabrication: Each physical production line has a virtual counterpart. Data from sensors on the factory floor (slurry viscosity, coating speed, drying-oven temperature) is fed into the twin. The AI identifies which specific manufacturing variables correlate with cell longevity. If a cell fails, the digital twin traces its production history to isolate the specific parameter deviation.

- Fleet-to-Lab Feedback: The most advanced capability involves ingesting telemetry data from millions of vehicles on the road. Battery Management Systems (BMS) transmit data on charge cycles, operating temperatures, and degradation rates. This real-world data informs the next generation of chemistry, creating a closed-loop system where the product in the field directly instructs the R&D team in the lab.
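The PINN idea of a physics penalty in the loss can be sketched with NumPy. This is a simplification under stated assumptions. A true PINN differentiates a neural network with automatic differentiation, for example in JAX or PyTorch. Here, finite differences are applied instead to a predicted concentration grid, and Fick's second law of diffusion stands in as one example of a mass-transport constraint. The function names, grid, and "sensor" positions are all illustrative.

```python
import numpy as np


def diffusion_residual(c: np.ndarray, dt: float, dx: float, diffusivity: float) -> np.ndarray:
    """Finite-difference residual of Fick's second law, dc/dt - D * d2c/dx2.

    c has shape (n_times, n_positions); the residual covers interior points
    using a forward difference in time and a central difference in space.
    """
    dc_dt = (c[1:, 1:-1] - c[:-1, 1:-1]) / dt
    d2c_dx2 = (c[:-1, 2:] - 2 * c[:-1, 1:-1] + c[:-1, :-2]) / dx**2
    return dc_dt - diffusivity * d2c_dx2


def physics_informed_loss(c_pred, obs_idx, obs_values, dt, dx, diffusivity, weight=1.0):
    """Data misfit at measured points plus a weighted penalty on the physics residual."""
    data_term = np.mean((c_pred[obs_idx] - obs_values) ** 2)
    physics_term = np.mean(diffusion_residual(c_pred, dt, dx, diffusivity) ** 2)
    return data_term + weight * physics_term


# Build a field that obeys the discretized diffusion equation exactly (explicit Euler).
nt, nx, dt, dx, D = 50, 21, 1e-3, 0.05, 1.0  # D*dt/dx**2 = 0.4 keeps the scheme stable
c = np.zeros((nt, nx))
c[0, nx // 2] = 1.0
for n in range(nt - 1):
    c[n + 1, 1:-1] = c[n, 1:-1] + D * dt / dx**2 * (c[n, 2:] - 2 * c[n, 1:-1] + c[n, :-2])

obs_idx = (np.array([10, 40]), np.array([10, 12]))  # (time index, position index) of "sensors"
obs_values = c[obs_idx]

noisy = c + np.random.default_rng(0).normal(0.0, 0.01, c.shape)
print(physics_informed_loss(c, obs_idx, obs_values, dt, dx, D))      # near zero: consistent with physics
print(physics_informed_loss(noisy, obs_idx, obs_values, dt, dx, D))  # large: violates physics
```

The `weight` parameter controls the balance between fitting measurements and obeying the constraint. The noisy field is penalized because its rough profile breaks the diffusion equation, even though it stays close to the true values everywhere.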
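A digital twin's traceback step can be approximated by comparing a failed cell's production record against the distribution of records from cells that passed. The sketch below assumes hypothetical parameter names and values and flags any parameter whose z-score exceeds 3. This is one simple approach among many, and a flagged deviation marks a candidate cause for engineers to investigate, not proof of causation.

```python
from statistics import mean, stdev


def baseline_stats(passing_cells: list[dict]) -> dict:
    """Mean and sample standard deviation of each process parameter across passing cells."""
    names = passing_cells[0].keys()
    return {
        name: (mean([cell[name] for cell in passing_cells]),
               stdev([cell[name] for cell in passing_cells]))
        for name in names
    }


def flag_deviations(failed_cell: dict, stats: dict, z_threshold: float = 3.0) -> list:
    """Return (parameter, z-score) pairs that exceed the threshold, largest first."""
    flagged = []
    for name, value in failed_cell.items():
        mu, sigma = stats[name]
        z = (value - mu) / sigma if sigma > 0 else 0.0
        if abs(z) >= z_threshold:
            flagged.append((name, round(z, 2)))
    return sorted(flagged, key=lambda item: abs(item[1]), reverse=True)


# Hypothetical production records logged by the twin for cells that passed testing.
passing = [
    {"slurry_viscosity_mpa_s": 5000, "coating_speed_m_min": 40, "oven_temp_c": 120},
    {"slurry_viscosity_mpa_s": 5100, "coating_speed_m_min": 41, "oven_temp_c": 121},
    {"slurry_viscosity_mpa_s": 4900, "coating_speed_m_min": 39, "oven_temp_c": 119},
    {"slurry_viscosity_mpa_s": 5050, "coating_speed_m_min": 40, "oven_temp_c": 120},
    {"slurry_viscosity_mpa_s": 4950, "coating_speed_m_min": 40, "oven_temp_c": 120},
]
failed = {"slurry_viscosity_mpa_s": 5020, "coating_speed_m_min": 40.5, "oven_temp_c": 125}

print(flag_deviations(failed, baseline_stats(passing)))
```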

The Bottleneck Of Implementation

While the theoretical advantages are substantial, operationalizing this approach introduces specific risks that firms often underestimate.

First, data quality remains the primary constraint. "Garbage in, garbage out" applies with extreme severity in computational chemistry. Inaccurate labels or sensor noise in the factory data can lead to models that optimize for false positives. A model predicting high energy density for a cathode that is chemically unstable in mass production is worse than no model at all.
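Gross sensor faults are the easiest class of noise to catch before factory data is used for training. The minimal sketch below uses the modified z-score, 0.6745·|x − median| / MAD, with the commonly cited threshold of 3.5 (Iglewicz and Hoaglin). The oven log is hypothetical. The filter cannot detect mislabeled outcomes or subtle drift, so it addresses only part of the data-quality problem described above.

```python
from statistics import median


def drop_outliers(readings: list[float], threshold: float = 3.5) -> list[float]:
    """Remove readings whose modified z-score, 0.6745 * |x - median| / MAD, exceeds threshold."""
    med = median(readings)
    mad = median([abs(x - med) for x in readings])
    if mad == 0:
        return list(readings)  # no spread to judge against; keep everything
    return [x for x in readings if 0.6745 * abs(x - med) / mad <= threshold]


# Hypothetical oven-temperature log with one spurious spike from a faulty sensor.
oven_temps = [120.1, 119.8, 120.3, 119.9, 120.0, 187.5, 120.2]
print(drop_outliers(oven_temps))
```

A median-based statistic suits this job because, unlike the mean and standard deviation, it is barely moved by the spike it is trying to detect.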

Second, the talent gap is a structural issue. Battery chemistry requires domain expertise in solid-state physics and electrochemistry. Data science requires expertise in neural network architecture and linear algebra. Personnel who possess deep fluency in both domains are rare. The institutional challenge for CATL and others is not purchasing computing power, but integrating materials scientists into the software development lifecycle.

Third, the reliance on high-performance computing (HPC) creates a capital expenditure dependency. Running deep generative models for material discovery consumes large amounts of energy and requires specialized GPU clusters. This shifts R&D spending from traditional lab equipment to cloud infrastructure and hardware.

Strategic Action

The competitive advantage in the battery sector is shifting from chemical synthesis capacity to data-processing velocity.

For industry participants, the required play is to invert the standard R&D model. Instead of treating software as a support tool for the lab, the lab must become a support tool for the software.

- Establish Data Provenance: Ensure every gram of material tested in the lab is logged with its production parameters. If data is not structured, it is useless for training models.

- Prioritize Model Interpretability: Black-box AI is insufficient for safety-critical components. Invest in "Explainable AI" (XAI) that allows chemists to understand why a model recommends a specific material composition.

- Pivot to Fleet-Wide Telemetry: The most valuable R&D asset is not the lab equipment but the BMS data from deployed vehicles. Secure the ability to pull granular, high-frequency data from the field. This is the most direct way to validate accelerated aging models against actual usage, and it yields a predictive accuracy that lab simulation alone cannot achieve.
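Permutation importance is one model-agnostic technique that helps explain a recommendation. It shuffles one input feature and measures how much the model's error rises. The sketch below applies it to a toy `hypothetical_model` that, by construction, depends only on manganese fraction. Shuffling particle size therefore costs nothing, while shuffling manganese fraction degrades predictions. Feature names and values are invented for illustration, and established libraries such as scikit-learn offer more complete implementations.

```python
import random


def mse(model, rows, targets):
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(model, rows, targets, feature, n_repeats=20, seed=0):
    """Average rise in MSE when one feature's column is shuffled across rows."""
    rng = random.Random(seed)
    baseline = mse(model, rows, targets)
    total = 0.0
    for _ in range(n_repeats):
        column = [r[feature] for r in rows]
        rng.shuffle(column)
        shuffled = [{**r, feature: v} for r, v in zip(rows, column)]
        total += mse(model, shuffled, targets) - baseline
    return total / n_repeats


def hypothetical_model(row):
    # Toy "retention predictor" that depends only on manganese fraction.
    return 0.95 - 0.1 * row["mn_fraction"]


rows = [{"mn_fraction": m, "particle_size_um": s}
        for m, s in [(0.1, 8), (0.2, 12), (0.3, 9), (0.4, 15), (0.5, 10), (0.6, 11)]]
targets = [hypothetical_model(r) for r in rows]

for name in ("mn_fraction", "particle_size_um"):
    print(name, round(permutation_importance(hypothetical_model, rows, targets, name), 6))
```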
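Validating an accelerated aging model against the field can be as simple as fitting the same fade curve to both data sources and comparing the rates. The sketch assumes a simplified square-root fade law, capacity loss = k·√cycles, a form often used as a rough approximation for SEI-driven loss. Real aging models also account for temperature, depth of discharge, and calendar time. Both datasets are hypothetical.

```python
import math


def fit_sqrt_fade(cycles: list[float], retention: list[float]) -> float:
    """Least-squares k for the assumed model capacity_loss = k * sqrt(cycles) (no intercept)."""
    x = [math.sqrt(n) for n in cycles]
    y = [1.0 - r for r in retention]
    return sum(xi * yi for xi, yi in zip(x, y)) / sum(xi * xi for xi in x)


def predict_retention(k: float, cycles: float) -> float:
    return 1.0 - k * math.sqrt(cycles)


# Hypothetical data: accelerated lab test vs. BMS-reported field fleet, same cycle counts.
cycles = [100, 400, 900]
k_lab = fit_sqrt_fade(cycles, [0.990, 0.980, 0.970])
k_field = fit_sqrt_fade(cycles, [0.985, 0.970, 0.955])

print(f"field/lab fade ratio: {k_field / k_lab:.2f}")
print(f"retention at 1600 cycles: lab {predict_retention(k_lab, 1600):.3f}, "
      f"field {predict_retention(k_field, 1600):.3f}")
```

With these invented numbers, the field fleet fades 1.5 times faster than the lab test predicts. That is exactly the kind of gap only deployed-vehicle telemetry can reveal, and it would feed back into recalibrating the lab protocol.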

The firms that win the next decade of battery production will not be those with the most PhDs in a lab, but those with the most efficient data architecture bridging the gap between the factory floor and the molecular simulator.