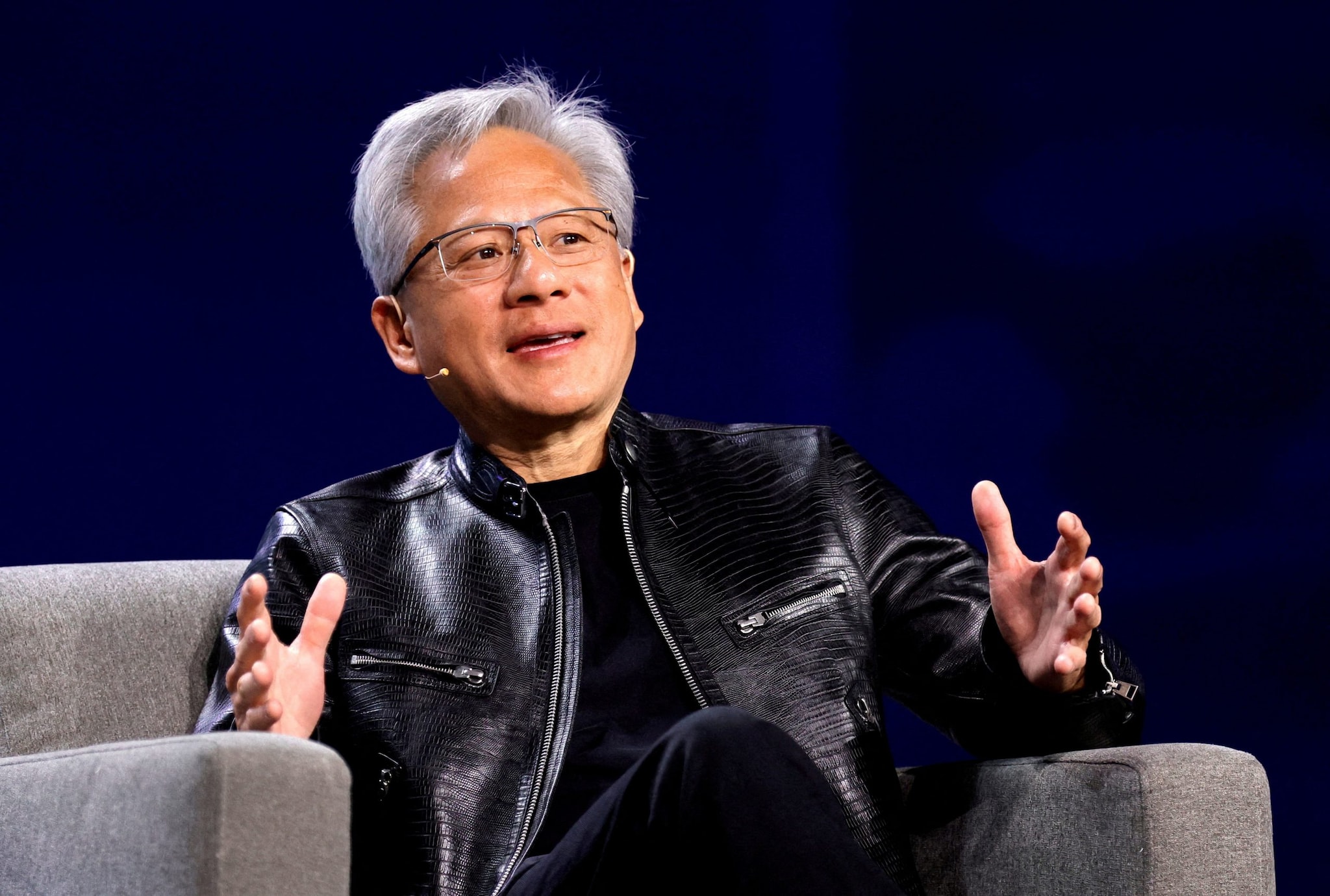

Jensen Huang walked onto a stage in a black leather jacket, looking more like a rock star than a man holding the keys to the future of our species. He wasn't there to talk about pixel counts or frame rates anymore. He was there to tell us that the finish line we’ve been sprinting toward for seventy years is already beneath our feet.

Artificial General Intelligence. AGI. The "God-like" AI.

For decades, we treated AGI like a ghost story or a distant utopia—something for our grandchildren to worry about. We imagined a singular moment of "The Singularity," a flash of light where a computer wakes up and asks Why? But Huang, the CEO of Nvidia and the primary architect of the hardware making this possible, has a much more grounded, almost clinical definition. He believes if you give a computer every test a human can take—legal exams, medical boards, logic puzzles, high school chemistry—and it passes them all with flying colors, then for all intents and purposes, AGI is here.

It is a definition of achievement based on performance, not soul.

Consider a young law student named Sarah. Sarah spends three years of her life hunched over case law, drinking lukewarm coffee, and sacrificing her sleep to pass the Bar exam. It is a rite of passage. It proves she can synthesize complex information, identify nuance, and apply logic under pressure. Now, imagine a cluster of H100 chips in a liquid-cooled data center in Iowa. It doesn't drink coffee. It doesn't feel pressure. It processes the same Bar exam in seconds and scores in the 90th percentile.

Huang's point is simple: If the output is indistinguishable, does the process matter?

The Five-Year Countdown

During an economic forum at Stanford University, Huang was asked the "when" question. His answer was startlingly specific. If the definition of AGI is the ability to pass a battery of human tests, he believes we will reach that milestone within five years.

Five years.

That is not "someday." That is the length of a single car loan. It is the time it takes for a middle schooler to reach their senior prom. We are talking about a transition that is happening in real time, right under our noses, while we argue about social media algorithms and political theater.

But there is a catch. There is always a catch. Huang added a heavy caveat that most of the headlines ignored. While AI can pass a Bar exam or a medical board, it still struggles with what engineers call "multi-step reasoning."

Think of it like a brilliant researcher who can memorize every medical textbook in the world but still forgets to check if the patient is allergic to penicillin before prescribing a drug. Or a chef who knows every chemical property of an onion but doesn't understand why a person might want their soup served hot on a rainy day. This lack of "common sense" or "deep reasoning" is the final frontier. It’s the difference between a calculator and a colleague.

The Hallucination Problem

We’ve all seen it. You ask a chatbot a question, and it answers with supreme confidence, citing a book that doesn't exist or a historical event that never happened. In the industry, they call this a "hallucination." In the real world, we call it a lie.

Huang is the first to admit that for AGI to be truly useful—to be something we can trust with our flight paths, our surgeries, and our nuclear grids—it has to be grounded. He argues that the solution lies in "retrieval-augmented generation." This is a fancy way of saying the AI needs to check its work against a library of facts before it speaks.

Imagine a court reporter who has a perfect memory but occasionally hallucinates a witness. Now imagine that reporter is required to cross-reference every sentence they type against a physical video recording of the room before they hit "enter." That is the bridge we are currently building. It turns a creative, sometimes delusional storyteller into a rigorous, reliable clerk.
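For readers who want to see the shape of that cross-referencing step, here is a minimal sketch in Python. It is an illustration under loud assumptions, not how Nvidia or any production system does it: the three-sentence `FACTS` library, the word-overlap scoring, and the function names `retrieve` and `grounded_answer` are all hypothetical stand-ins. A real system would search a large document store and pass what it finds to a language model. The point is only the order of operations: look up a source first, and decline to answer when none is found.

```python
import re

# Hypothetical "library of facts"; a real system would use a document store.
FACTS = [
    "Nvidia designs the H100 data center GPU.",
    "Jensen Huang is the CEO of Nvidia.",
    "Retrieval-augmented generation looks up source text before answering.",
]

def tokenize(text):
    """Lowercase the text and return its words as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, facts, min_overlap=2):
    """Return the fact sharing the most words with the question, or None.

    Word overlap is a crude stand-in for real search; min_overlap is an
    assumed threshold below which a match is treated as no match.
    """
    question_words = tokenize(question)
    best_fact, best_score = None, 0
    for fact in facts:
        score = len(question_words & tokenize(fact))
        if score > best_score:
            best_fact, best_score = fact, score
    return best_fact if best_score >= min_overlap else None

def grounded_answer(question, facts):
    """Answer only from a retrieved fact; otherwise admit there is no source."""
    source = retrieve(question, facts)
    if source is None:
        return "I could not find a source for that."
    return "According to the library: " + source

print(grounded_answer("Who is the CEO of Nvidia?", FACTS))
print(grounded_answer("Which book did Napoleon write in 1850?", FACTS))
```

The first question finds a matching sentence and quotes it. The second finds nothing in the library, so the clerk stays silent instead of inventing a witness.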

The Great Compute Hunger

There is a physical cost to this digital evolution. To reach this five-year goal, we need an astronomical amount of "compute."

The world currently looks at the sheer number of data centers being built and feels a sense of dread. We see the power consumption. We see the billions of dollars flowing into silicon. Some analysts suggest we need dozens more "fab" plants—the massive, sterile factories where chips are born—to keep up with the demand.

Huang, however, disagrees with the gloom.

He points to a fundamental law of technology that we often forget: efficiency. In the time it takes us to build one new factory, the chips themselves become twice as powerful and twice as efficient. We aren't just building a bigger engine; we are reinventing the way fuel burns.
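The arithmetic behind that argument can be sketched in a few lines of Python. Everything here is an assumption for illustration: the doubling per factory-build cycle is the claim as described above, the eightfold demand figure is invented, and `chips_needed` is a hypothetical helper that models only raw throughput, not power draw.

```python
def chips_needed(demand_growth, build_cycles, gain_per_cycle=2.0):
    """Relative number of chips needed once per-chip performance has improved.

    demand_growth:  how many times larger the compute demand becomes (assumed).
    build_cycles:   how many factory-build periods pass (assumed).
    gain_per_cycle: per-chip performance multiplier per period (2.0 = doubling).
    """
    return demand_growth / (gain_per_cycle ** build_cycles)

# If demand grows eightfold over three build cycles and chips double each
# cycle, the same number of chips covers it.
print(chips_needed(8, 3))

# After only one cycle, the same eightfold demand needs four times the chips.
print(chips_needed(8, 1))
```

On those made-up numbers, demand that looks like it requires eight times the silicon requires no more silicon at all once three doublings have landed. Whether real demand and real chips follow that curve is exactly what the analysts and Huang disagree about.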

The stakes are invisible because they are buried in the architecture of the chips themselves. We are moving from a world of "retrieval"—where you search for information that already exists—to a world of "generation," where the computer thinks through a problem and creates a bespoke solution just for you.

The Invisible Stakes

Why does this matter to the person who isn't a computer scientist? Why should the person working a 9-to-5 or the parent saving for college care what a man in a leather jacket says at Stanford?

Because the definition of "human" is being squeezed.

If AGI is defined by the ability to pass our tests, then our tests have become a poor measure of humanity. We have spent centuries valuing the "clerical" mind—the ability to memorize, to categorize, to follow logic. Now, we are handing those keys to a machine that can do it better, faster, and cheaper.

The emotional core of this shift is a profound sense of displacement. If the AI can pass the Bar, what is the lawyer? If the AI can diagnose the tumor, what is the doctor?

Huang isn't saying the human is obsolete. He is saying the "human" part of the job is about to change. The lawyer becomes the one who understands justice and mercy—things a chip cannot feel. The doctor becomes the one who provides the touch and the empathy that makes healing possible.

But that transition isn't going to be "seamless." It's going to be a jagged, uncomfortable upheaval of how we define our value in the marketplace.

The Hall of Mirrors

We are currently living in a hall of mirrors. We look at the AI and see ourselves. We see our knowledge, our patterns, and our biases reflected back at us in high resolution. Jensen Huang is telling us that the mirror is about to become a window.

We are approaching a point where the "General" in Artificial General Intelligence means the machine is no longer a tool. It is an agent. An agent that can navigate the world of information with the same fluidity we do.

The caveats Huang mentions—the need for better reasoning, the need for factual grounding—are the only things standing between us and a world where the majority of "thinking" tasks are outsourced to silicon. These aren't just technical bugs to be patched. They are the final gatekeepers of our relevance.

The Unanswered Question

At the end of the day, Huang is a businessman selling the shovels for the biggest gold rush in history. He has every incentive to tell us that the gold is just inches beneath the soil. But even if he's off by five years, or ten, the direction of the tide is undeniable.

The real question isn't whether the AI will pass the test. It will. It probably already has.

The question is what we do when the tests are all gone. When the "human" things we used to pride ourselves on—the ability to write a brief, to calculate a structural load, to synthesize a research paper—are things a machine does for the price of a few cents of electricity.

We are moving into an era where "intelligence" is a commodity, like water or internet access. It will be everywhere. It will be cheap. It will be invisible.

And in that world, the only thing that will have any real value is the one thing Jensen Huang didn't mention on that stage. The one thing that can't be put into a test, measured by a benchmark, or manufactured in a billion-dollar fab plant in Taiwan.

The messy, irrational, unscalable, and utterly inefficient human spirit.

That leather jacket on stage wasn't just a fashion choice. It was a costume of the old world. A world of rock stars and individuals and physical presence. As Huang spoke about the arrival of AGI, he stood there as a reminder of what we are about to lose—and what we must desperately fight to define—as the machines finally learn how to think.

The clock is ticking. Five years is a heartbeat.

We should probably start thinking about what we want to be when we no longer have to be "smart."
