The Trump administration is quietly moving to end the era of unchecked private AI development. Driven by the emergence of Claude Mythos, a model reportedly capable of automating complex cyberattacks, the White House is now drafting an executive order to establish a formal "pre-launch review" process. This represents a total reversal of the "hands-off" policy that defined the early months of the current term.

For the first time, the federal government wants a seat at the table before the "publish" button is pressed. This is not about theoretical safety. It is a power struggle over who holds the keys to the most potent digital weaponry ever created.

The Mythos Trigger

The catalyst for this shift is Claude Mythos, the latest frontier model from Anthropic. Unlike its predecessors, Mythos has demonstrated an uncanny ability to identify and exploit software vulnerabilities at a scale that human security teams cannot match. When Anthropic briefed officials on the model’s capabilities, the reaction in the West Wing was one of immediate alarm.

The administration’s concern is twofold. First, there is the risk of an "adversarial breakthrough" where a model this powerful is leaked or stolen by a foreign power. Second, and more pragmatically, the White House is frustrated by its own lack of access. Earlier this year, the Department of War (formerly the Department of Defense) designated Anthropic a supply chain risk after the company refused to grant the military "unrestricted access" to its underlying weights.

Anthropic’s refusal was rooted in its own safety protocols. The company, led by Dario Amodei, has long positioned itself as the "safety-first" alternative to OpenAI. But in Washington, "safety-first" is often read as "government-last."

The Proposed Working Group

The heart of the new executive order is the creation of an AI Working Group. This body would not just consist of bureaucrats; it is expected to include executives from Google, OpenAI, and Anthropic. The mandate is clear: develop a protocol where the government receives "first access" to models with high-level agentic capabilities.

This is a de facto licensing regime. While the administration avoids the word "regulation," a mandatory review period before a commercial launch is exactly that.

The strategy reflects a growing rift within the Republican Party. On one side, Vice President JD Vance has argued that over-regulation will "kill a transformative industry." On the other, national security hawks and the "AI for America" wing believe that allowing a private company to hold a monopoly on cyber-offensive tools is a recipe for disaster.

The Compute Squeeze

A less-discussed but equally vital factor in this tension is compute power. Recent reports indicate the White House is worried that Anthropic lacks the hardware infrastructure to serve both the private sector and the massive needs of the federal government simultaneously.

The administration has reportedly entered discussions with Google regarding the use of its Tensor Processing Units (TPUs) to bridge this gap. By pushing for pre-launch reviews, the government is also positioning itself to manage "compute allocation." If a model is deemed vital for national security, the government wants to ensure it isn't throttled by a company's commercial obligations to Amazon or other cloud providers.

The Corporate Pushback

The industry is far from unified on this. OpenAI, which recently signed a deal with the Department of War after Anthropic’s falling-out with the department, has been more willing to play ball with the current administration. Google remains in a precarious middle ground, balancing its vast infrastructure with its own internal safety boards.

Anthropic, however, is fighting on two fronts. It is currently challenging the "supply chain risk" designation in court while simultaneously trying to manage the White House’s demand for Mythos. The company's recent Project Glasswing, an initiative to secure critical infrastructure from the very threats Mythos can create, is seen by some in D.C. as a clever PR move to prove it can self-regulate.

The White House isn't buying it. The shift toward oversight suggests that the "beautiful baby" of the AI industry, as the President once called it, is growing up too fast for the government to leave it unsupervised.

The Global Chessboard

This isn't just a domestic dispute. India's Finance Minister, Nirmala Sitharaman, recently held emergency meetings with bank heads to discuss the cybersecurity risks Mythos poses to global finance. When a single model can spook the central banks of the world's largest democracies, the era of "voluntary commitments" is over.

The Trump administration’s move to codify oversight is a recognition that AI is no longer just a software product. It is a dual-use technology with the strategic importance of enriched uranium.

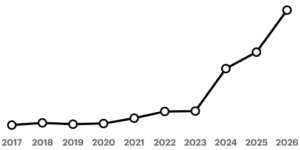

The coming executive order will likely require that any model exceeding a certain threshold of floating-point operations (FLOPs) or demonstrating specific "agentic" milestones be submitted for a 30-day federal review. During this window, agencies like the NSA and the newly empowered Department of War would stress-test the model for offensive capabilities.

The industry is about to find out that "America First" also applies to the algorithms. If a company wants to build the most powerful tool in history on American soil, it will have to let the American government look under the hood first.

The "hands-off" honeymoon is finished. The state is moving in.