Gallup is worried that you aren't clicking the "magic" button enough. Their recent data suggests a "troubling" stagnation in workplace AI adoption, painting a picture of a hesitant, fearful workforce clinging to the dusty relics of manual labor. They see a gap. I see a filter.

The prevailing narrative—the one being shoved down your throat by C-suite executives and McKinsey consultants—is that the "laggards" who aren't using Large Language Models (LLMs) daily are doomed to obsolescence. They call it a skills gap. They call it resistance to change.

They are wrong.

The people refusing to use AI for every mundane task aren't Luddites. They are the only ones left with a functioning "bullshit detector." We are witnessing the first mass-scale strike against subsidized mediocrity.

The Mirage of Productivity

The "lazy consensus" argues that AI adoption is a binary: you either use it and become a "super-employee," or you ignore it and become a dinosaur. This premise is fundamentally flawed because it measures activity, not value.

I have watched companies burn seven-figure budgets forcing "AI integration" into workflows that were already optimized. The result? A 30% increase in email volume and a 50% decrease in original thought. When Gallup reports that employees aren't using AI, they forget to mention that many of these employees tried it, realized it produced generic, hallucinated garbage that required more time to fact-check than to write from scratch, and made the rational decision to stop.

Real productivity isn't about how many words you can generate per minute. It is about the quality of the decisions those words provoke. If an AI helps you write a memo that nobody reads twice as fast, you haven't gained time. You’ve just accelerated the heat death of the corporate universe.

The Hidden Cost of the "Prompt Engineer"

We’ve been told that "prompt engineering" is the career path of the future. It’s actually a temporary workaround for a UI failure.

The industry insiders who actually build these models—not the ones selling them—know that the goal is to eliminate prompting entirely. Spending forty minutes "coaxing" a model to produce a specific tone is not a win. It is a labor shift. You’ve moved from being a creator to being a glorified editor of a mediocre intern’s first draft.

Smart employees have done the math. They realize that for complex, high-stakes tasks, the cognitive load of monitoring an AI for "hallucinations" (a polite term for confident lying) is higher than the load of simply doing the work.

$\text{Cognitive Load}_{\text{Total}} = \text{Task Execution} + \text{Error Monitoring} + \text{Context Switching}$

When you use AI, Task Execution might drop, but Error Monitoring and Context Switching skyrocket. For the truly elite performers, the equation doesn't balance out. They aren't "avoiding" AI; they are protecting their focus.
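
To make the break-even point explicit, here is a rough sketch. The symbols are my own shorthand, not measured quantities: $E_{\text{manual}}$ and $E_{\text{AI}}$ are the Task Execution load without and with the tool, $M$ is Error Monitoring, and $S$ is Context Switching. It also assumes, for simplicity, that doing the work by hand carries no monitoring or switching overhead of its own.

$$
E_{\text{AI}} + M + S < E_{\text{manual}}
\quad\Longleftrightarrow\quad
E_{\text{manual}} - E_{\text{AI}} > M + S
$$

In words: the tool only pays off when the execution effort it saves is larger than the monitoring and switching effort it adds. For high-stakes work, where $M$ grows with the cost of a missed error, that inequality is hard to satisfy.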

The Commoditization of the Average

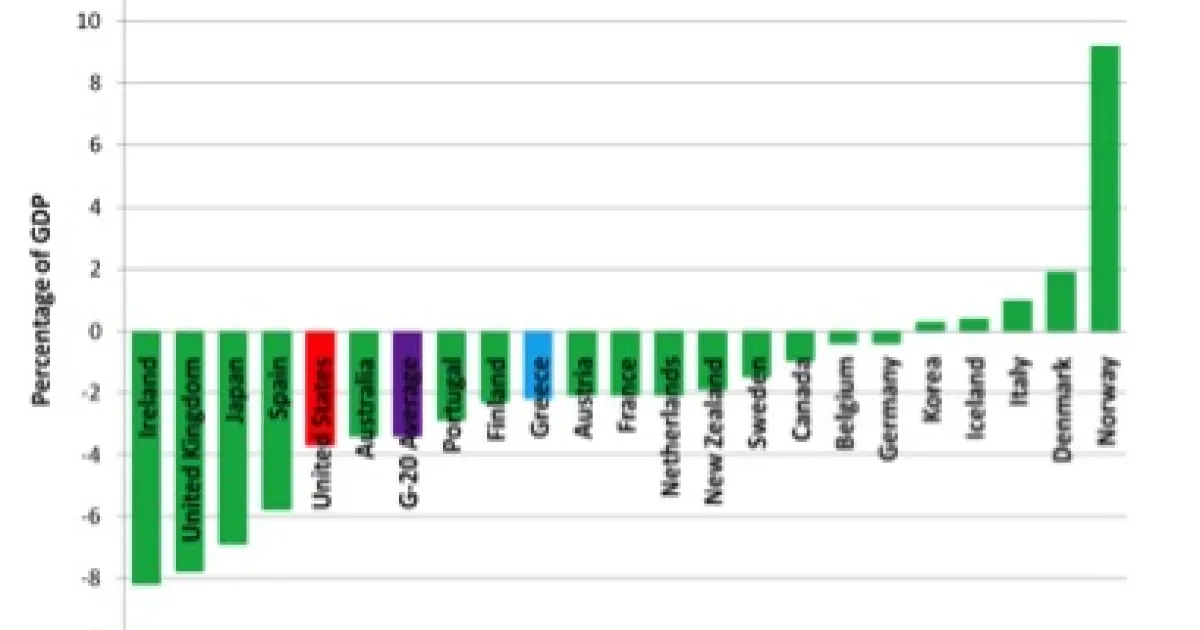

If you use AI to do your job, and your competitor uses AI to do their job, you both regress to the mean. You are both using the same training data to arrive at the same "optimal" (read: average) conclusion.

The Gallup poll results are a blessing in disguise for businesses that actually value competitive advantage. If everyone in your industry adopts AI at 100%, "average" becomes the new ceiling. The employees who are not using AI—the ones digging into primary sources, talking to humans, and doing the hard work of synthesis—are the only ones creating non-commodity value.

We are entering an era where "Human-Made" will be a luxury brand in the knowledge economy.

The Hallucination of Ease

Let’s dismantle the "Ease of Use" myth. The reason adoption is stalling isn't that the tools are hard to use; it's that the tools are deceptive.

In a high-stakes environment (legal, medical, or high-level engineering), an error rate of 5% isn't an "early-stage quirk." It's a liability. I've seen a mid-sized law firm nearly lose a discovery motion because a junior associate used a popular LLM to cite cases that didn't exist. The "time saved" evaporated instantly in the three weeks of reputational repair that followed.

The employees who are "choosing not to use" AI are often the ones who understand the stakes. They are the ones who realize that "stochastic parrots" (as Emily Bender, Timnit Gebru, and their coauthors famously called these models) do not possess an internal model of reality. They possess a statistical map of word associations.

- Fact: AI does not "know" things.

- Fact: AI predicts the next most likely token.

- Inference: If your job requires truth, a prediction engine is a dangerous tool.
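
If "predicts the next most likely token" sounds abstract, a toy version makes the point. The sketch below is a bigram counter, which is vastly simpler than a real LLM and is not how any production model is built; the corpus and the function names `train_bigrams` and `predict_next` are invented for illustration. What it shares with the real thing is the failure mode: it returns the most frequent continuation, with no notion of whether that continuation is true.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical training text: frequency is all the model has to go on.
corpus = (
    "the court ruled for the plaintiff . "
    "the court ruled for the defendant . "
    "the court ruled for the plaintiff ."
)
model = train_bigrams(corpus)

print(predict_next(model, "court"))  # most common word after "court"
print(predict_next(model, "judge"))  # never seen in training
```

Ask this model who the court ruled for and it will answer from frequency, not from the record of any actual case. Scale changes the fluency, not the principle.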

Stop Trying to "Fix" Adoption

Management's typical response to the Gallup data is to mandate "AI Literacy" training. This is a waste of time. You cannot train someone to trust a tool that proves itself unreliable once every ten interactions.

Instead of forcing adoption, companies should be asking: "Why is our work so repetitive that a chatbot can mimic it?"

If an AI can do 80% of an employee's job, that isn't a signal to automate the employee. It’s a signal that the job has been stripped of its human agency and reduced to administrative theater. The "laggards" are often the people whose work involves too much nuance, empathy, and high-level strategy for a transformer model to grasp.

The Brutal Truth About the "AI Divide"

The divide isn't between the tech-savvy and the tech-illiterate. It’s between the Generators and the Synthesizers.

Generators love AI. They want to churn out content, code, and emails to look busy. They thrive on the volume. Synthesizers, however, are the ones who connect disparate ideas to create something new. AI is terrible at synthesis because it is fundamentally backward-looking—it can only rearrange what has already been said.

If your "adoption rates" are low, congratulations. You might actually have a workforce of Synthesizers.

The Counter-Intuitive Advice for Leadership

- Reward the "No": Ask your best performers why they don't use AI. Their answers will reveal the critical gaps in your business logic that no software can fix.

- Tax the Output: If an employee uses AI to generate a report, require them to sign off on an "Accuracy Guarantee" where they take personal, professional responsibility for every single comma. Watch how fast "adoption" drops when the accountability is real.

- Prioritize Proprietary Data: Stop feeding your team's unique insights into public models. You are training your own replacement for a few hours of saved time. It’s the ultimate short-term play.

The Gallup poll isn't a warning of a slow future. It’s a snapshot of a workforce that is starting to realize the "free lunch" of AI comes with a heavy price: the erosion of individual expertise.

Stop measuring how many people are using the tool. Start measuring who is still capable of working without it. Those are the only people you'll actually need when the hype cycle finally corrects.

Burn the prompt guides. Hire people who think for a living.